The Hadoop 2.x release brings many changes to Hadoop and MapReduce. The centralized JobTracker service is replaced by a ResourceManager, which manages the resources in the cluster, and a per-application ApplicationMaster, which manages the application lifecycle. These architectural changes enable Hadoop to scale to much larger clusters. For more details on the architectural changes in Hadoop next-gen (a.k.a. YARN), watch this video or visit this blog.

This post walks through installing Hadoop 2.x (a.k.a. YARN, a.k.a. next-gen) on a single-node cluster.

Prerequisites:

- Java 6 installed

- Dedicated user for hadoop

- SSH configured

Steps to install Hadoop 2.x:

1. Download tarball

You can download the tarball for Hadoop 2.x from here. Extract it to a folder, say /home/hduser/yarn. We assume the dedicated user for Hadoop is “hduser”.

$ cd /home/hduser/yarn

$ sudo chown -R hduser:hadoop hadoop-2.0.1-alpha

2. Setup Environment Variables

$ export HADOOP_HOME=$HOME/yarn/hadoop-2.0.1-alpha

$ export HADOOP_MAPRED_HOME=$HOME/yarn/hadoop-2.0.1-alpha

$ export HADOOP_COMMON_HOME=$HOME/yarn/hadoop-2.0.1-alpha

$ export HADOOP_HDFS_HOME=$HOME/yarn/hadoop-2.0.1-alpha

$ export HADOOP_YARN_HOME=$HOME/yarn/hadoop-2.0.1-alpha

$ export HADOOP_CONF_DIR=$HOME/yarn/hadoop-2.0.1-alpha/etc/hadoop

This step is very important: if you miss a variable or set a value incorrectly, jobs will fail and the cause will be very difficult to track down.

Also, add these lines to your ~/.bashrc or other shell start-up script so that you don’t need to set them every time.
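Since a missing or mistyped variable is hard to spot later, a quick sanity check can help. The sketch below is only an illustration: check_hadoop_env is a hypothetical helper name, not something shipped with Hadoop, and it only verifies that each of the six variables above is set and names an existing directory.

```shell
# check_hadoop_env is a hypothetical helper, not part of Hadoop.
# It reports every variable from step 2 that is unset or does not
# name an existing directory, and returns non-zero if any is wrong.
check_hadoop_env() {
    rc=0
    for name in HADOOP_HOME HADOOP_MAPRED_HOME HADOOP_COMMON_HOME \
                HADOOP_HDFS_HOME HADOOP_YARN_HOME HADOOP_CONF_DIR; do
        eval "value=\${$name:-}"
        if [ -z "$value" ]; then
            echo "$name is not set"
            rc=1
        elif [ ! -d "$value" ]; then
            echo "$name points to a missing directory: $value"
            rc=1
        fi
    done
    return $rc
}

if check_hadoop_env; then
    echo "all Hadoop variables look sane"
fi
```

Running it in a fresh shell after editing ~/.bashrc confirms that the start-up script really exports everything.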

3. Create directories

Create two directories to be used by namenode and datanode.

$ mkdir -p $HOME/yarn/yarn_data/hdfs/namenode

$ mkdir -p $HOME/yarn/yarn_data/hdfs/datanode

4. Set up config files

$ cd $HADOOP_YARN_HOME

Add the following properties inside the configuration element of each file listed below:

etc/hadoop/yarn-site.xml:

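- yarn.nodemanager.aux-services = mapreduce_shuffle

- yarn.nodemanager.aux-services.mapreduce_shuffle.class = org.apache.hadoop.mapred.ShuffleHandler

The service name uses an underscore as of Hadoop 2.2.0; earlier alpha releases used mapreduce.shuffle in both the value and the class property name.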

etc/hadoop/core-site.xml:

- fs.default.name = hdfs://localhost:9000

etc/hadoop/hdfs-site.xml:

- dfs.replication = 1

- dfs.namenode.name.dir = file:/home/hduser/yarn/yarn_data/hdfs/namenode

- dfs.datanode.data.dir = file:/home/hduser/yarn/yarn_data/hdfs/datanode

etc/hadoop/mapred-site.xml:

If this file does not exist, create it and paste the content provided below:

- mapreduce.framework.name = yarn
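Each property above goes into a property element with a name and a value child. As a rough sketch of what the four files could look like, the script below writes them into a scratch directory so they can be reviewed before being copied into etc/hadoop. CONF_SKETCH_DIR, DATA_DIR and write_property_file are hypothetical names introduced for this illustration, and the yarn-site.xml names assume Hadoop 2.2.0 or later.

```shell
# Sketch only: writes the four single-node config files to a scratch directory.
# CONF_SKETCH_DIR, DATA_DIR and write_property_file are hypothetical names.
CONF_SKETCH_DIR="${CONF_SKETCH_DIR:-./hadoop-conf-sketch}"
DATA_DIR="${DATA_DIR:-/home/hduser/yarn/yarn_data/hdfs}"

# write_property_file FILE NAME VALUE [NAME VALUE ...]
# Emits one <property> element per NAME/VALUE pair.
write_property_file() {
    file="$1"
    shift
    {
        echo '<?xml version="1.0"?>'
        echo '<configuration>'
        while [ "$#" -ge 2 ]; do
            echo '  <property>'
            echo "    <name>$1</name>"
            echo "    <value>$2</value>"
            echo '  </property>'
            shift 2
        done
        echo '</configuration>'
    } > "$file"
}

mkdir -p "$CONF_SKETCH_DIR"

# Underscore form of the shuffle service name (Hadoop 2.2.0 and later).
write_property_file "$CONF_SKETCH_DIR/yarn-site.xml" \
    yarn.nodemanager.aux-services mapreduce_shuffle \
    yarn.nodemanager.aux-services.mapreduce_shuffle.class org.apache.hadoop.mapred.ShuffleHandler

# fs.default.name is the key used in this post; Hadoop 2.x also accepts fs.defaultFS.
write_property_file "$CONF_SKETCH_DIR/core-site.xml" \
    fs.default.name hdfs://localhost:9000

write_property_file "$CONF_SKETCH_DIR/hdfs-site.xml" \
    dfs.replication 1 \
    dfs.namenode.name.dir "file:$DATA_DIR/namenode" \
    dfs.datanode.data.dir "file:$DATA_DIR/datanode"

write_property_file "$CONF_SKETCH_DIR/mapred-site.xml" \
    mapreduce.framework.name yarn

echo "wrote config sketch to $CONF_SKETCH_DIR"
```

Writing to a scratch directory keeps the stock files that ship with the tarball intact; copy the generated files over only once they look right for your release.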

5. Format namenode

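This step is needed only the first time. Formatting again wipes everything stored in HDFS.

$ bin/hdfs namenode -format

The older form, bin/hadoop namenode -format, still works but prints a deprecation warning that points to the hdfs command.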
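Because an accidental second format destroys the data, it can be worth guarding the step. The sketch below assumes that a formatted name directory contains a current/VERSION file; namenode_is_formatted and NAME_DIR are hypothetical names for this illustration. It only reports what it finds and leaves running the format command to you.

```shell
# namenode_is_formatted DIR succeeds when DIR already holds namenode metadata.
# Assumption: a formatted name directory contains current/VERSION.
namenode_is_formatted() {
    [ -f "$1/current/VERSION" ]
}

# NAME_DIR is a hypothetical override; the default is the directory from step 3.
name_dir="${NAME_DIR:-$HOME/yarn/yarn_data/hdfs/namenode}"

if namenode_is_formatted "$name_dir"; then
    echo "namenode already formatted, do not format again: $name_dir"
else
    echo "namenode not formatted yet: $name_dir"
    echo "run the format command from this step once"
fi
```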

6. Start HDFS processes

Name node:

$ sbin/hadoop-daemon.sh start namenode

starting namenode, logging to /home/hduser/yarn/hadoop-2.0.1-alpha/logs/hadoop-hduser-namenode-pc3-laptop.out

$ jps

18509 Jps

17107 NameNode

Data node:

$ sbin/hadoop-daemon.sh start datanode

starting datanode, logging to /home/hduser/yarn/hadoop-2.0.1-alpha/logs/hadoop-hduser-datanode-pc3-laptop.out

$ jps

18509 Jps

17107 NameNode

17170 DataNode

7. Start Hadoop Map-Reduce Processes

Resource Manager:

$ sbin/yarn-daemon.sh start resourcemanager

starting resourcemanager, logging to /home/hduser/yarn/hadoop-2.0.1-alpha/logs/yarn-hduser-resourcemanager-pc3-laptop.out

$ jps

18509 Jps

17107 NameNode

17170 DataNode

17252 ResourceManager

Node Manager:

$ sbin/yarn-daemon.sh start nodemanager

starting nodemanager, logging to /home/hduser/yarn/hadoop-2.0.1-alpha/logs/yarn-hduser-nodemanager-pc3-laptop.out

$ jps

18509 Jps

17107 NameNode

17170 DataNode

17252 ResourceManager

17309 NodeManager

Job History Server:

$ sbin/mr-jobhistory-daemon.sh start historyserver

starting historyserver, logging to /home/hduser/yarn/hadoop-2.0.1-alpha/logs/yarn-hduser-historyserver-pc3-laptop.out

$ jps

18509 Jps

17107 NameNode

17170 DataNode

17252 ResourceManager

17309 NodeManager

17626 JobHistoryServer
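Reading jps output by eye works, but a small filter makes it harder to miss a daemon that died right after starting. The sketch below defines missing_daemons, a hypothetical helper that reads jps output on standard input and prints every expected daemon that is absent. The demo feeds it the sample output from step 6, before the YARN daemons are started.

```shell
# missing_daemons is a hypothetical helper: it reads `jps` output on stdin
# and prints each of the five daemons from steps 6 and 7 that is not listed.
missing_daemons() {
    jps_output="$(cat)"
    for daemon in NameNode DataNode ResourceManager NodeManager JobHistoryServer; do
        # Match the process name exactly (second column of jps output).
        if ! printf '%s\n' "$jps_output" |
                awk -v d="$daemon" '$2 == d { found = 1 } END { exit !found }'; then
            echo "$daemon"
        fi
    done
}

# Demo with the sample output shown after starting only the HDFS daemons.
printf '%s\n' '18509 Jps' '17107 NameNode' '17170 DataNode' | missing_daemons
# prints:
# ResourceManager
# NodeManager
# JobHistoryServer
```

On a live machine the usage would be `jps | missing_daemons`; empty output means all five daemons are up.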

8. Running the famous wordcount example to verify installation

$ mkdir in

$ cat > in/file

This is one line

This is another one

Press Ctrl+D to end the input.

Add this directory to HDFS:

$ bin/hadoop dfs -copyFromLocal in /in

Run wordcount example provided:

$ bin/hadoop jar share/hadoop/mapreduce/hadoop-mapreduce-examples-2.*-alpha.jar wordcount /in /out

Check the output:

$ bin/hadoop dfs -cat /out/*

This 2

another 1

is 2

line 1

one 2
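To cross-check the job output without the cluster, the same counts can be computed locally. This is a plain awk and sort pipeline, not how MapReduce computes them; local_wordcount is a hypothetical helper name, and the input is recreated in a scratch directory.

```shell
# Recreate the two-line input from this step in a scratch directory.
workdir="$(mktemp -d)"
mkdir -p "$workdir/in"
printf 'This is one line\nThis is another one\n' > "$workdir/in/file"

# local_wordcount FILE prints "word<TAB>count" for every whitespace-separated
# word, sorted bytewise so that capitalized words come first.
local_wordcount() {
    awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
         END { for (word in count) printf "%s\t%d\n", word, count[word] }' "$1" |
        LC_ALL=C sort
}

local_wordcount "$workdir/in/file"
# prints (tab-separated):
# This    2
# another 1
# is      2
# line    1
# one     2
```

If the HDFS output differs from this, the input file on HDFS is probably not the one you think it is.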

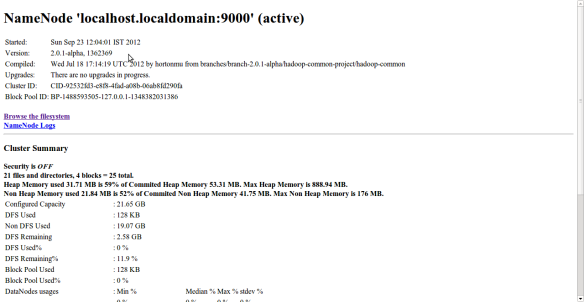

9. Web interface

Browse HDFS and check health using http://localhost:50070 in the browser:

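You can check the status of running applications in the ResourceManager web UI at http://localhost:8088, the same address that the job console output prints as the tracking URL.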

10. Stop the processes

$ sbin/hadoop-daemon.sh stop namenode

$ sbin/hadoop-daemon.sh stop datanode

$ sbin/yarn-daemon.sh stop resourcemanager

$ sbin/yarn-daemon.sh stop nodemanager

$ sbin/mr-jobhistory-daemon.sh stop historyserver
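The start and stop commands differ only in the action word, so they can be generated from one list. The sketch below prints the commands instead of running them; daemon_commands is a hypothetical helper name, and the order simply mirrors steps 6, 7 and 10 of this post.

```shell
# daemon_commands ACTION prints the daemon-script invocations used in this post.
# ACTION must be "start" or "stop". Hypothetical helper, not part of Hadoop.
daemon_commands() {
    action="$1"
    case "$action" in
        start|stop) ;;
        *) echo "usage: daemon_commands start|stop" >&2; return 1 ;;
    esac
    echo "sbin/hadoop-daemon.sh $action namenode"
    echo "sbin/hadoop-daemon.sh $action datanode"
    echo "sbin/yarn-daemon.sh $action resourcemanager"
    echo "sbin/yarn-daemon.sh $action nodemanager"
    echo "sbin/mr-jobhistory-daemon.sh $action historyserver"
}

daemon_commands stop
```

From the Hadoop installation directory, `daemon_commands start | sh` would run the printed commands; reviewing the printed list first is the safer habit.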

Happy Coding!!!

Thanks a lot! This is the best resource I found for the latest Hadoop release. Others were outdated. I faced an issue where the hostname was not found when formatting the data node. Using other internet resources, I found that “hostname -fsqn” was giving an error. So I used yast > network settings > hostname/dns > assign hostname to loopback ip and checked the box. It took a long time to find, as I am a Linux newbie.

Happy to help!!! 🙂 I guess your problem was related to an entry in the /etc/hosts file, wasn’t it?

Yes, that just added a new entry to /etc/hosts with my machine name.

Hi,

Nice. I am able to run jobs on my local machine.

I am just wondering where the job history files are located. Do you have any idea about this? Can this location be configured in Hadoop 2.0?

Hi,

The job history tasks are usually located at localhost:19888.

However if you look at the console output when you run a MapReduce job you should see the link for the job results there.

For example:

The url to track the job: http://localhost:8088/proxy/application_1384700761740_0001/

Regards,

Dan

Excellent tutorial! I just followed it and it went well without any error. I was able to run the word count example.

Thanks. Happy if it helped you.

The step for starting the “secondarynamenode” service is missing from the article:

$ sbin/hadoop-daemon.sh start secondarynamenode

Hi ,

When I am running bin/hadoop namenode -format

I am getting following error:

hduser@ubuntu:~/lab/install/hadoop-2.0.4-alpha$ bin/hadoop namenode -format

bin/hadoop: line 26: /home/hduser/lab/install/hadoop-2.0.4-alpha/bin/../libexec/hadoop-config.sh: No such file or directory

DEPRECATED: Use of this script to execute hdfs command is deprecated.

Instead use the hdfs command for it.

/home/hduser/lab/install/hadoop-2.0.4-alpha/bin/hdfs: line 34: /home/hduser/lab/install/hadoop-2.0.4-alpha/bin/../libexec/hdfs-config.sh: No such file or directory

/home/hduser/lab/install/hadoop-2.0.4-alpha/bin/hdfs: line 150: cygpath: command not found

/home/hduser/lab/install/hadoop-2.0.4-alpha/bin/hdfs: line 191: exec: : not found

First of all, thanks a lot, Rasesh, for the wonderful tutorial. With its help, I configured the Hadoop 2.1.0 Beta version on the first try. All components are up and running.

nayan@nayan-desktop:~/dev$ jps

11426

1546 ResourceManager

2038 JobHistoryServer

2100 Jps

1365 DataNode

1275 NameNode

1792 NodeManager

But when I try to create a directory, it is failing.

nayan@nayan-desktop:~/dev$ hdfs dfs -mkdir /home/nayan/hdfs/sample

13/08/30 17:07:03 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable

mkdir: `/home/nayan/hdfs/sample’: No such file or directory

Can you please tell what I am doing wrong?

Thanks,

Nayan

Hi friends

The installation method is very easy to understand on hadoop-2.1.0-beta.

thank you

Great tutorial, thanks for sharing.

As of Hadoop 2.2.0 it looks like two things need to change in the yarn-site.xml configuration file (mapreduce.shuffle is now mapreduce_shuffle). Mine looks like this now:

yarn.nodemanager.aux-services

mapreduce_shuffle

yarn.nodemanager.aux-services.mapreduce_shuffle.class

org.apache.hadoop.mapred.ShuffleHandler

if I don’t do this, I see the following error:

2013-10-14 22:26:21,228 FATAL org.apache.hadoop.yarn.server.nodemanager.NodeManager: Error starting NodeManager

java.lang.IllegalArgumentException: The ServiceName: mapreduce.shuffle set in yarn.nodemanager.aux-services is invalid.The valid service name should only contain a-zA-Z0-9_ and can not start with numbers

urg, my copy/paste was botched up but hopefully this still makes sense. The value mapreduce.shuffle is now mapreduce_shuffle and the name yarn.nodemanager.aux-services.mapreduce.shuffle.class is now yarn.nodemanager.aux-services.mapreduce_shuffle.class

Thanks Joe very much. It will help others…

Thanks for this, had the exact same error and this solved it for me!

Thanks, that helped a lot!

headache saver, thanks!!

Nice work, Rasesh. This is one of the better, simpler getting started tutorials I’ve seen. Kudos.

Thank you… Happy to help!!

Good write-up, thanks, but I have one problem: everything works fine except that jobs don’t show up on localhost:8088. The job list is empty, and when I submit any job the log shows it is using LocalJobRunner. Any idea where I could be going wrong?

Thanks in advance

It was a nice article; it was very useful for me as well as for online Hadoop training learners. Thanks for providing this valuable information.

Hi Rasesh

Thanks for this wonderful tutorial. It was really very helpful. I did all the setup, and when I try to run a job I get the error below. I am not sure what the issue is. Can you help me find the possible reason?

13/11/04 15:54:59 INFO Configuration.deprecation: mapreduce.reduce.class is deprecated. Instead, use mapreduce.job.reduce.class

13/11/04 15:54:59 INFO Configuration.deprecation: mapred.input.dir is deprecated. Instead, use mapreduce.input.fileinputformat.inputdir

13/11/04 15:54:59 INFO Configuration.deprecation: mapred.output.dir is deprecated. Instead, use mapreduce.output.fileoutputformat.outputdir

13/11/04 15:54:59 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

13/11/04 15:54:59 INFO Configuration.deprecation: mapred.output.key.class is deprecated. Instead, use mapreduce.job.output.key.class

13/11/04 15:54:59 INFO Configuration.deprecation: mapred.working.dir is deprecated. Instead, use mapreduce.job.working.dir

13/11/04 15:54:59 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1383608961434_0003

13/11/04 15:54:59 INFO impl.YarnClientImpl: Submitted application application_1383608961434_0003 to ResourceManager at /0.0.0.0:8032

13/11/04 15:54:59 INFO mapreduce.Job: The url to track the job: http://Office-Mac-2.local:8088/proxy/application_1383608961434_0003/

13/11/04 15:54:59 INFO mapreduce.Job: Running job: job_1383608961434_0003

13/11/04 15:55:02 INFO mapreduce.Job: Job job_1383608961434_0003 running in uber mode : false

13/11/04 15:55:02 INFO mapreduce.Job: map 0% reduce 0%

13/11/04 15:55:02 INFO mapreduce.Job: Job job_1383608961434_0003 failed with state FAILED due to: Application application_1383608961434_0003 failed 2 times due to AM Container for appattempt_1383608961434_0003_000002 exited with exitCode: 127 due to: Exception from container-launch:

org.apache.hadoop.util.Shell$ExitCodeException:

at org.apache.hadoop.util.Shell.runCommand(Shell.java:464)

at org.apache.hadoop.util.Shell.run(Shell.java:379)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:589)

at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:195)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:283)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:79)

at java.util.concurrent.FutureTask.run(FutureTask.java:262)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:744)

Hi Vivek,

Did you manage to solve this? Same problem here..

Lars

Hi Lars,

I found a problem in one of the Hadoop environment variables set in my .bash_profile file. I am not sure if it’s the same case for you. For reference, I am giving the content of my file. Hope it will help.

export JAVA_HOME=$(/usr/libexec/java_home)

export HADOOP_MAPRED_HOME=/Volumes/MacNewHD/Hadoop/hadoop-2.2.0/

export HADOOP_COMMON_HOME=/Volumes/MacNewHD/Hadoop/hadoop-2.2.0/

export HADOOP_HDFS_HOME=/Volumes/MacNewHD/Hadoop/hadoop-2.2.0/

export YARN_HOME=/Volumes/MacNewHD/Hadoop/hadoop-2.2.0/

export HADOOP_YARN_HOME=/Volumes/MacNewHD/Hadoop/hadoop-2.2.0/

export HADOOP_CONF_DIR=/Volumes/MacNewHD/Hadoop/hadoop-2.2.0/etc/hadoop/

export YARN_CONF_DIR=$HADOOP_CONF_DIR

export PATH=/opt/subversion/bin:/usr/local/bin:/usr/bin:/bin:/usr/sbin:/sbin:/usr/local/binexport:$JAVA_HOME/bin:

Thanks Vivek! I solved it! I had some leftover configurations from other tutorials, so after replacing my config files with the original ones and making the changes explained in this tutorial it was fixed.

Thanx a lot, this was super helpful!

One minor change is that the config for nodemanager in yarn-site.xml seems to have ‘_’ instead of ‘.’ in it:

yarn.nodemanager.aux-services

mapreduce_shuffle

NodeManager was failing to start without it.

Thanks Vishal for pointing that out. I guess it changed after I posted this. I have updated the post as you suggested.

Hi,

I am getting the following error while starting the NodeManager, and because of it the NodeManager process is killed. Do I need to configure an entry in the /etc/hosts file?

2013-11-08 16:46:49,701 INFO org.apache.hadoop.yarn.client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8031

2013-11-08 16:46:53,738 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 0 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:46:56,742 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 1 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:00,750 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 2 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:03,758 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 3 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:07,766 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 4 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:11,774 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 5 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:14,782 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 6 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:18,790 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 7 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:22,798 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 8 time(s); retry policy is RetryUpToMaximumCountWithFixedSleep(maxRetries=10, sleepTime=1 SECONDS)

2013-11-08 16:47:25,806 INFO org.apache.hadoop.ipc.Client: Retrying connect to server: 0.0.0.0/0.0.0.0:8031. Already tried 9 time(s); retry policy is RetryUpToMa

Thanks

Balan.P

Hey Balan,

I got exactly the same problem. Did you solve that?

Allen

I got this error when my ResourceManager was not running and I was trying to run MapReduce programs. The ResourceManager needs to be started first.

Hi All,

I’m getting a ClassNotFound issue in every execution. I suspect I’m missing something very basic (Hadoop 2.2 on Windows with Cygwin).

I found comments suggesting adding -classpath “$(cygpath -pw “$CLASSPATH”)” somewhere (any idea where exactly?).

Thanks,

Niteesh

Great, very helpful, a very very very big thank you !

I am currently reading Hadoop in Action book and was unable to run hadoop on my ubuntu. But, after I followed your configurations I was able to run Hadoop successfully …. !

Happy to help!!

Did you ever get this running on Windows? I’m stuck with the same problem you had: $ hadoop version

Error: Could not find or load main class org.apache.hadoop.util.VersionInfo

Add the following line in your .bashrc file in cygwin

export HADOOP_CLASSPATH=$(cygpath -pw $(hadoop classpath)):$HADOOP_CLASSPATH

This issue will be resolved

How can I solve this error?

http://localhost:8088/proxy/application_1384700761740_0001/

HTTP ERROR 500

Problem accessing /proxy/application_1384700761740_0001/. Reason:

org.apache.hadoop.yarn.exceptions.ApplicationNotFoundException: Application with id ‘application_1384700761740_0001’ doesn’t exist in RM.

Caused by:

java.io.IOException: org.apache.hadoop.yarn.exceptions.ApplicationNotFoundException: Application with id ‘application_1384700761740_0001’ doesn’t exist in RM.

at org.apache.hadoop.yarn.server.webproxy.WebAppProxyServlet.doGet(WebAppProxyServlet.java:341)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:707)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:820)

at org.mortbay.jetty.servlet.ServletHolder.handle(ServletHolder.java:511)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1221)

at com.google.inject.servlet.FilterChainInvocation.doFilter(FilterChainInvocation.java:66)

at com.sun.jersey.spi.container.servlet.ServletContainer.doFilter(ServletContainer.java:900)

at com.sun.jersey.spi.container.servlet.ServletContainer.doFilter(ServletContainer.java:834)

at com.sun.jersey.spi.container.servlet.ServletContainer.doFilter(ServletContainer.java:795)

at com.google.inject.servlet.FilterDefinition.doFilter(FilterDefinition.java:163)

at com.google.inject.servlet.FilterChainInvocation.doFilter(FilterChainInvocation.java:58)

at com.google.inject.servlet.ManagedFilterPipeline.dispatch(ManagedFilterPipeline.java:118)

at com.google.inject.servlet.GuiceFilter.doFilter(GuiceFilter.java:113)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter.doFilter(StaticUserWebFilter.java:109)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.HttpServer$QuotingInputFilter.doFilter(HttpServer.java:1081)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.NoCacheFilter.doFilter(NoCacheFilter.java:45)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.NoCacheFilter.doFilter(NoCacheFilter.java:45)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.mortbay.jetty.servlet.ServletHandler.handle(ServletHandler.java:399)

at org.mortbay.jetty.security.SecurityHandler.handle(SecurityHandler.java:216)

at org.mortbay.jetty.servlet.SessionHandler.handle(SessionHandler.java:182)

at org.mortbay.jetty.handler.ContextHandler.handle(ContextHandler.java:766)

at org.mortbay.jetty.webapp.WebAppContext.handle(WebAppContext.java:450)

at org.mortbay.jetty.handler.ContextHandlerCollection.handle(ContextHandlerCollection.java:230)

at org.mortbay.jetty.handler.HandlerWrapper.handle(HandlerWrapper.java:152)

at org.mortbay.jetty.Server.handle(Server.java:326)

at org.mortbay.jetty.HttpConnection.handleRequest(HttpConnection.java:542)

at org.mortbay.jetty.HttpConnection$RequestHandler.headerComplete(HttpConnection.java:928)

at org.mortbay.jetty.HttpParser.parseNext(HttpParser.java:549)

at org.mortbay.jetty.HttpParser.parseAvailable(HttpParser.java:212)

at org.mortbay.jetty.HttpConnection.handle(HttpConnection.java:404)

at org.mortbay.io.nio.SelectChannelEndPoint.run(SelectChannelEndPoint.java:410)

at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)

Caused by: org.apache.hadoop.yarn.exceptions.ApplicationNotFoundException: Application with id ‘application_1384700761740_0001’ doesn’t exist in RM.

at org.apache.hadoop.yarn.server.resourcemanager.ClientRMService.getApplicationReport(ClientRMService.java:247)

at org.apache.hadoop.yarn.server.webproxy.AppReportFetcher.getApplicationReport(AppReportFetcher.java:90)

at org.apache.hadoop.yarn.server.webproxy.WebAppProxyServlet.getApplicationReport(WebAppProxyServlet.java:221)

at org.apache.hadoop.yarn.server.webproxy.WebAppProxyServlet.doGet(WebAppProxyServlet.java:276)

… 36 more

Caused by:

org.apache.hadoop.yarn.exceptions.ApplicationNotFoundException: Application with id ‘application_1384700761740_0001’ doesn’t exist in RM.

at org.apache.hadoop.yarn.server.resourcemanager.ClientRMService.getApplicationReport(ClientRMService.java:247)

at org.apache.hadoop.yarn.server.webproxy.AppReportFetcher.getApplicationReport(AppReportFetcher.java:90)

at org.apache.hadoop.yarn.server.webproxy.WebAppProxyServlet.getApplicationReport(WebAppProxyServlet.java:221)

at org.apache.hadoop.yarn.server.webproxy.WebAppProxyServlet.doGet(WebAppProxyServlet.java:276)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:707)

at javax.servlet.http.HttpServlet.service(HttpServlet.java:820)

at org.mortbay.jetty.servlet.ServletHolder.handle(ServletHolder.java:511)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1221)

at com.google.inject.servlet.FilterChainInvocation.doFilter(FilterChainInvocation.java:66)

at com.sun.jersey.spi.container.servlet.ServletContainer.doFilter(ServletContainer.java:900)

at com.sun.jersey.spi.container.servlet.ServletContainer.doFilter(ServletContainer.java:834)

at com.sun.jersey.spi.container.servlet.ServletContainer.doFilter(ServletContainer.java:795)

at com.google.inject.servlet.FilterDefinition.doFilter(FilterDefinition.java:163)

at com.google.inject.servlet.FilterChainInvocation.doFilter(FilterChainInvocation.java:58)

at com.google.inject.servlet.ManagedFilterPipeline.dispatch(ManagedFilterPipeline.java:118)

at com.google.inject.servlet.GuiceFilter.doFilter(GuiceFilter.java:113)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.lib.StaticUserWebFilter$StaticUserFilter.doFilter(StaticUserWebFilter.java:109)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.HttpServer$QuotingInputFilter.doFilter(HttpServer.java:1081)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.NoCacheFilter.doFilter(NoCacheFilter.java:45)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.apache.hadoop.http.NoCacheFilter.doFilter(NoCacheFilter.java:45)

at org.mortbay.jetty.servlet.ServletHandler$CachedChain.doFilter(ServletHandler.java:1212)

at org.mortbay.jetty.servlet.ServletHandler.handle(ServletHandler.java:399)

at org.mortbay.jetty.security.SecurityHandler.handle(SecurityHandler.java:216)

at org.mortbay.jetty.servlet.SessionHandler.handle(SessionHandler.java:182)

at org.mortbay.jetty.handler.ContextHandler.handle(ContextHandler.java:766)

at org.mortbay.jetty.webapp.WebAppContext.handle(WebAppContext.java:450)

at org.mortbay.jetty.handler.ContextHandlerCollection.handle(ContextHandlerCollection.java:230)

at org.mortbay.jetty.handler.HandlerWrapper.handle(HandlerWrapper.java:152)

at org.mortbay.jetty.Server.handle(Server.java:326)

at org.mortbay.jetty.HttpConnection.handleRequest(HttpConnection.java:542)

at org.mortbay.jetty.HttpConnection$RequestHandler.headerComplete(HttpConnection.java:928)

at org.mortbay.jetty.HttpParser.parseNext(HttpParser.java:549)

at org.mortbay.jetty.HttpParser.parseAvailable(HttpParser.java:212)

at org.mortbay.jetty.HttpConnection.handle(HttpConnection.java:404)

at org.mortbay.io.nio.SelectChannelEndPoint.run(SelectChannelEndPoint.java:410)

at org.mortbay.thread.QueuedThreadPool$PoolThread.run(QueuedThreadPool.java:582)

Hi,

I have set up Hadoop 2.2.0 on Windows7(64 bit) with Cygwin

I am able to start dfs and yarn

but now when trying to run Word Count it fails while launching container.

It’s something related to classpath issue.

As I am getting following error

java.lang.NoClassDefFoundError: org/apache/hadoop/service/CompositeService

and

Could not find the main class: org.apache.hadoop.mapreduce.v2.app.MRAppMaster. Program will exit.

I have set system level env. variables as follows

HADOOP_COMMON_HOME=c:\hadoop

HADOOP_CONF_DIR=c:\hadoop\etc\hadoop

HADOOP_HDFS_HOME=c:\hadoop

HADOOP_HOME=c:\hadoop

HADOOP_MAPRED_HOME=c:\hadoop

YARN_HOME=c:\hadoop

Hello Friends,

This was created when 2.0 was in alpha stage and since then there have been some changes in the configurations. Based on some comments and other sources, I have updated the steps (mostly change in one env. variable YARN_HOME to HADOOP_YARN_HOME). I hope this will help all of you.

hduser@999:/usr/local/hadoop/hadoop/sbin$ ./start-all.sh

This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

Error: Cannot find configuration directory: /user/local/hadoop/hadoop/conf

starting yarn daemons

Error: Cannot find configuration directory: /user/local/hadoop/hadoop/conf

An enormous round of applause, continue the great work.

standard textile mills

Thanks for the really simple yet working instructions. I just followed these steps with 2.2.0 and got the word count example working.

Hi, when I try to run the built-in pi program, I get the error below. Earlier it ran successfully with hduser. I tried both users, hduser as well as root, but could not fix it.

Any help would be appreciated.

root@bigdata:/usr/local/hadoop# hadoop jar ./share/hadoop/mapreduce/hadoop-mapreduce-examples-2.2.0.jar pi 2 5

Number of Maps = 2

Samples per Map = 5

14/03/11 17:10:09 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform… using builtin-java classes where applicable

java.net.ConnectException: Call From bigdata/127.0.1.1 to localhost:9000 failed on connection exception: java.net.ConnectException: Connection refused; For more details see: http://wiki.apache.org/hadoop/ConnectionRefused

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:57)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:532)

at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:783)

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:730)

at org.apache.hadoop.ipc.Client.call(Client.java:1351)

at org.apache.hadoop.ipc.Client.call(Client.java:1300)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:206)

at sun.proxy.$Proxy9.getFileInfo(Unknown Source)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:616)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:186)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)

at sun.proxy.$Proxy9.getFileInfo(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.getFileInfo(ClientNamenodeProtocolTranslatorPB.java:651)

at org.apache.hadoop.hdfs.DFSClient.getFileInfo(DFSClient.java:1679)

at org.apache.hadoop.hdfs.DistributedFileSystem$17.doCall(DistributedFileSystem.java:1106)

at org.apache.hadoop.hdfs.DistributedFileSystem$17.doCall(DistributedFileSystem.java:1102)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:81)

at org.apache.hadoop.hdfs.DistributedFileSystem.getFileStatus(DistributedFileSystem.java:1102)

at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1397)

at org.apache.hadoop.examples.QuasiMonteCarlo.estimatePi(QuasiMonteCarlo.java:278)

at org.apache.hadoop.examples.QuasiMonteCarlo.run(QuasiMonteCarlo.java:354)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.hadoop.examples.QuasiMonteCarlo.main(QuasiMonteCarlo.java:363)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:616)

at org.apache.hadoop.util.ProgramDriver$ProgramDescription.invoke(ProgramDriver.java:72)

at org.apache.hadoop.util.ProgramDriver.run(ProgramDriver.java:144)

at org.apache.hadoop.examples.ExampleDriver.main(ExampleDriver.java:74)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:616)

at org.apache.hadoop.util.RunJar.main(RunJar.java:212)

Caused by: java.net.ConnectException: Connection refused

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:597)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:529)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:493)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:547)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:642)

at org.apache.hadoop.ipc.Client$Connection.access$2600(Client.java:314)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1399)

at org.apache.hadoop.ipc.Client.call(Client.java:1318)

… 33 more

Did you get a fix for this problem? I am also hitting a similar issue.

Did you manage to solve this error?

Thanks Rasesh Mori…:)

It is very helpful and easy to understand!!!!

Hi, when I used Hadoop version 1 I put all my libraries in the lib folder, but in Hadoop 2 there is only a native folder. Does anyone know where I must put my libs to use them in my apps?

Thanks for your responses.

Hi Rasesh,

great tutorial. I have one problem though. When I try to start the namenode I get this message:

starting namenode, logging to /home/hduser/yarn/hadoop-2.4.0/logs/hadoop-root-namenode-iti-313.out

Java HotSpot(TM) 64-Bit Server VM warning: You have loaded library /home/hduser/yarn/hadoop-2.4.0/lib/native/libhadoop.so.1.0.0 which might have disabled stack guard. The VM will try to fix the stack guard now.

It’s highly recommended that you fix the library with ‘execstack -c ‘, or link it with ‘-z noexecstack’.

Does anyone know what this is about?

Excellent, works like a charm. Can you also tell me how to compile a jar from a .java file that I created and then execute the Hadoop job?

Hi Rasesimori,

Thanks so much for your effort on this step-by-step guide, which helped me a great deal.

I followed many step-by-step guides that still lacked clarity. Even the Apache Hadoop website does not provide much information on getting started with a simple example.

But you have done a great job. I set up the environment and ran my first Hadoop application.

Thanks for your help, and keep posting useful information.

Thanks for sharing this post. It will help many. I have set up a few single-node and multi-node Hadoop 1.2.1 clusters on both Ubuntu 14.04 and Solaris 11. See http://hashprompt.blogspot.in/search/label/Hadoop

Hi,

I am getting the following error:

14/06/16 15:20:27 INFO mapreduce.Job: Job job_1401348657033_0013 running in uber mode : false

14/06/16 15:20:27 INFO mapreduce.Job: map 0% reduce 0%

14/06/16 15:20:27 INFO mapreduce.Job: Job job_1401348657033_0013 failed with state FAILED due to: Application application_1401348657033_0013 failed 2 times due to AM Container for appattempt_1401348657033_0013_000002 exited with exitCode: 1 due to: Exception from container-launch:

org.apache.hadoop.util.Shell$ExitCodeException:

at org.apache.hadoop.util.Shell.runCommand(Shell.java:464)

at org.apache.hadoop.util.Shell.run(Shell.java:379)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:589)

at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:195)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:283)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:79)

at java.util.concurrent.FutureTask.run(FutureTask.java:262)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1145)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:615)

at java.lang.Thread.run(Thread.java:744)

.Failing this attempt.. Failing the application.

14/06/16 15:20:27 INFO mapreduce.Job: Counters: 0

Can someone help?

Many Thanks,

~Kedar

Hi,

I went through your post and it is very good. Can you please post a similar installation tutorial for a Windows environment with vanilla Hadoop?

Thank You.

Pavan Kumar.

Hello,

I am trying to install a Hadoop Yarn single-node cluster, but it failed.

Now I would like to remove all the directories that were created and retry the installation, but I don’t know how.

Could you help me please?

Besides, I have been stuck at step 4, adding the properties, and I don’t know why either 😦

Help me please!

Thanks a lot in advance!

Hi Rasesh Mori,

Thanks for your tutorial. I successfully installed Hadoop on a single node. Now I have a problem, and I hope you can help me!

I ran a MapReduce program on approximately 6 GB of data and got this result:

java.io.IOException: No space left on device

Can you give me some advice or a solution for this problem?

Thank you so much!

Thang

Great tutorial but your configuration is wrong.

It took me some googling to fix it.

My datanode didn’t start because I copied your settings and you set

dfs.datanode.data.dir property rather than

dfs.datanode.name.dir property as you had for the namenode

under the etc/hadoop/hdfs-site.xml file.
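The comment above does not show the corrected file. As a rough sketch, assuming the Hadoop 2.x property names dfs.namenode.name.dir and dfs.datanode.data.dir, the following script lists which of the two storage-directory properties an hdfs-site.xml is missing. The function names and the sample XML are hypothetical and only for illustration; the paths follow the directories created in step 3.

```python
import xml.etree.ElementTree as ET

# Assumption: these are the HDFS storage-directory property names in Hadoop 2.x.
EXPECTED = ("dfs.namenode.name.dir", "dfs.datanode.data.dir")


def read_properties(xml_text):
    """Return {name: value} for every <property> in a Hadoop config document."""
    root = ET.fromstring(xml_text)
    props = {}
    for prop in root.iter("property"):
        name = prop.findtext("name")
        if name is not None:
            props[name.strip()] = (prop.findtext("value") or "").strip()
    return props


def missing_storage_dirs(xml_text):
    """Return the expected storage-directory properties that are not set."""
    props = read_properties(xml_text)
    return [name for name in EXPECTED if name not in props]


# Hypothetical hdfs-site.xml with a mistyped datanode property name.
SAMPLE = """<configuration>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/home/hduser/yarn/yarn_data/hdfs/namenode</value>
  </property>
  <property>
    <name>dfs.datanode.name.dir</name>
    <value>file:/home/hduser/yarn/yarn_data/hdfs/datanode</value>
  </property>
</configuration>"""

print(missing_storage_dirs(SAMPLE))
```

To check a real installation, read the contents of $HADOOP_CONF_DIR/hdfs-site.xml and pass them to missing_storage_dirs in place of SAMPLE.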

I have the same problem, but I am not able to understand exactly how you fixed it.

Hey Rasesh, nice post! By the way, do you know how to connect to Hive 2 from PHP using JDBC? I am stuck on that. I have done it in Java but am not able to do it in PHP.

This guide is perfect. Installed like a peach! I used it to install version 2.7.2. TYSM :)

hi!
